This reasoning is not exact, but it serves as a decent guideline for guessing what the entropy should be. It does not always work: think of a scenario with hundreds of outcomes, all dominated by one that occurs \(99.999\%\) of the time, where the entropy is close to zero despite the many possible outcomes. In practice, however, a split does not have to be perfect: it is enough if it leads to subsets with a lower total (weighted) entropy than the original dataset. Ideally, the split should lead to subsets with an entropy of 0.0.

A decision tree is a hierarchical decision support model that uses a tree-like model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility. It is one way to display an algorithm that contains only conditional control statements.
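A quick numerical check of that caveat, as a minimal Python sketch. The outcome count of 300 is an arbitrary illustrative choice, not something from the original text. With \(n\) outcomes the entropy is at most \(\log_2 n\), reached when all outcomes are equally likely, while a distribution dominated by one outcome has entropy near zero even though it has just as many outcomes.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a list of probabilities that sum to 1.
    Zero-probability outcomes contribute nothing and are skipped."""
    return sum(-p * math.log2(p) for p in probs if p > 0)

n = 300  # illustrative number of outcomes (assumption)
uniform = [1 / n] * n

# One outcome occurs 99.999% of the time; the other n - 1 share the remaining 0.001%.
dominant = 0.99999
skewed = [dominant] + [(1 - dominant) / (n - 1)] * (n - 1)

print(f"uniform: {entropy(uniform):.4f} bits")  # log2(300) ≈ 8.2288
print(f"skewed:  {entropy(skewed):.6f} bits")   # close to 0
```

Both distributions have 300 possible outcomes, so counting outcomes alone would suggest similar entropies; the actual values differ by more than eight bits.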

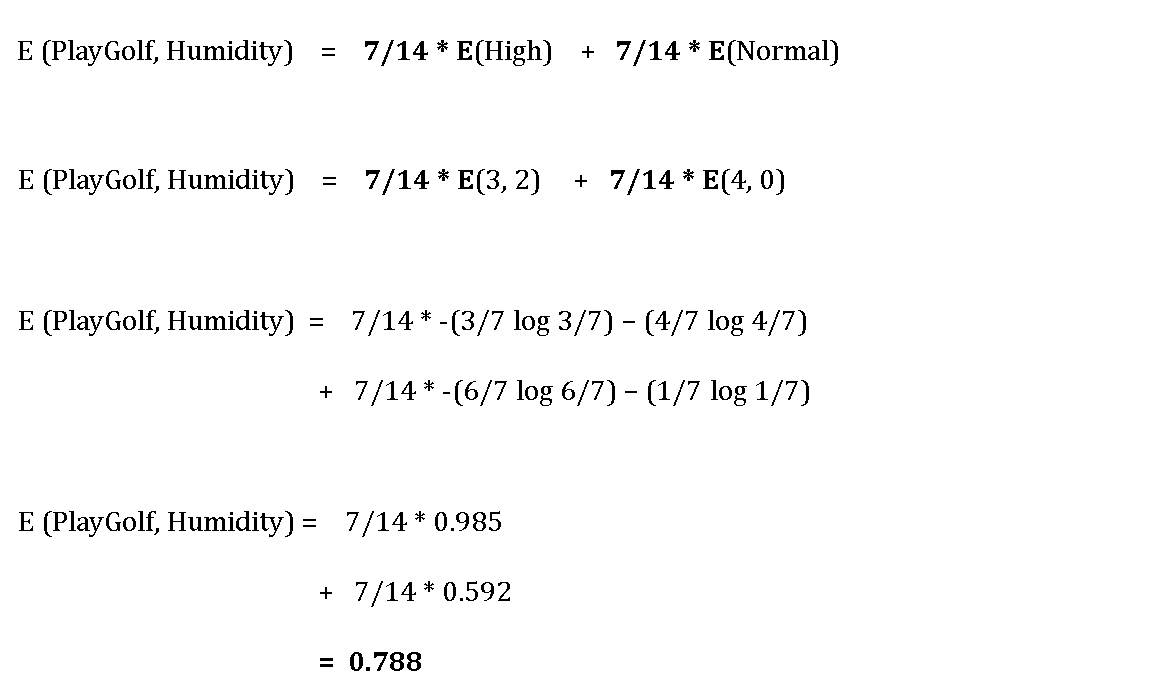

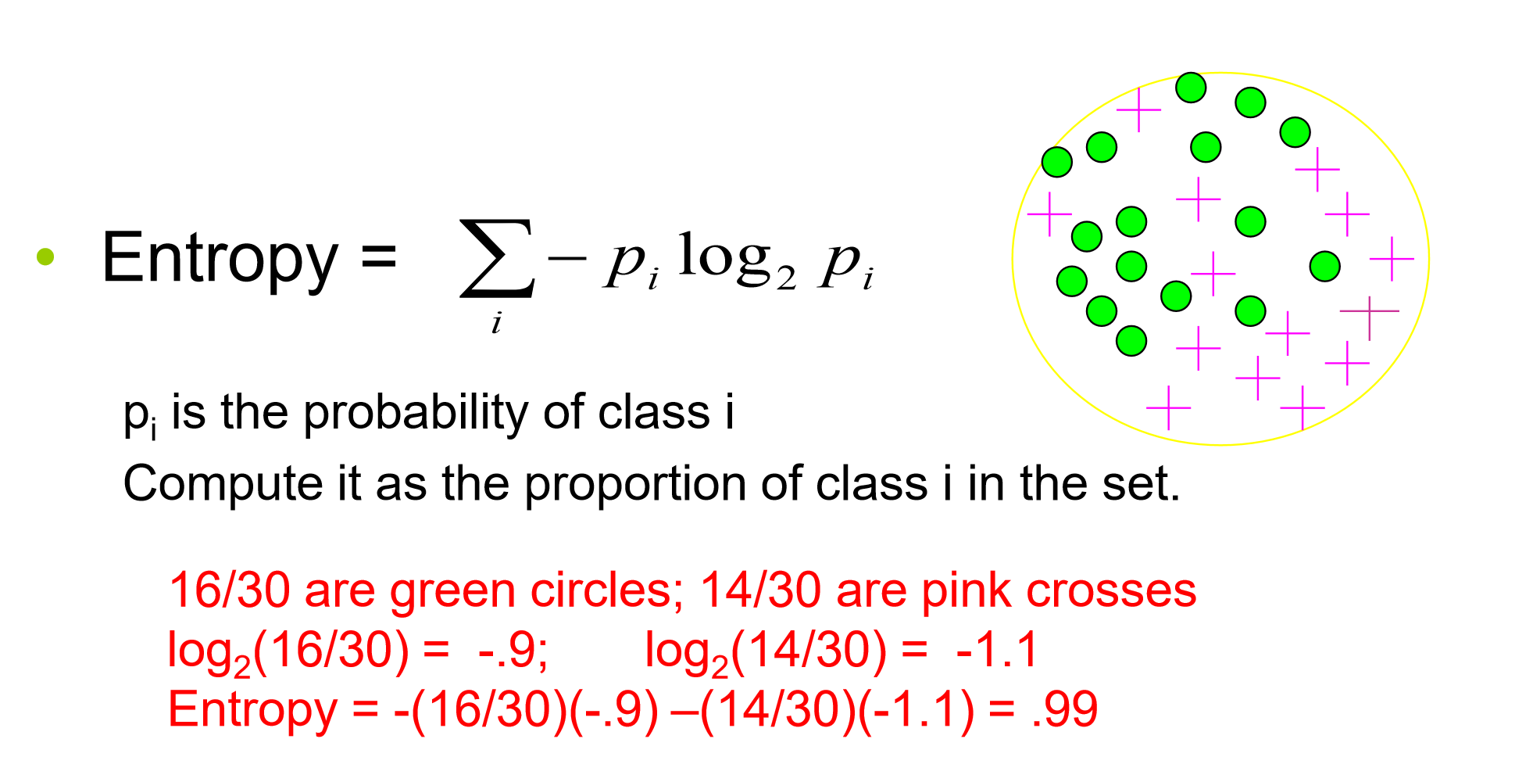

Before discussing the algorithm, please fully read the background information on entropy here.

In machine learning, entropy is a measure of the randomness in the information being processed. In simple words, entropy is the measure of how disordered your data is: essentially, how uncertain we are of the value drawn from some distribution. The higher the entropy, the more unpredictable the outcome is and the harder it is to draw any conclusions. For example, if I asked you to predict the outcome of a regular fair coin, you would have a \(50\%\) chance of being correct. If instead I used a coin for which both sides were tails, you could predict the outcome correctly \(100\%\) of the time. Entropy helps us quantify how uncertain we are of an outcome.

We can redefine entropy as the expected number of bits one needs to communicate any result from a distribution. The formula for entropy, \(H(X) = \sum_x p(x)\,(-\log_2 p(x))\), closely resembles the formula for the expected value, \(\mathbb{E}[X] = \sum_x x\,p(x)\); the primary difference between the two is \(x\) versus \(-\log_2 p(x)\).

The goal of each split in a decision tree is to move from a confused dataset to two (or more) purer subsets. While many decision tree algorithms exist, we will learn a decision tree construction algorithm (ID3 by J. Quinlan) that relies on the information-theoretic concepts of entropy and information gain. The purpose of computing the entropy is to help us get the information gain (IG), because the IG is the change in entropy of the original node from before to after splitting: \(IG(S, A) = H(S) - \sum_{v} \frac{|S_v|}{|S|} H(S_v)\), where \(S_v\) is the subset of \(S\) in which attribute \(A\) takes the value \(v\). In other words, the algorithm picks the split that maximizes the information gain, which is the mutual information between the splitting attribute and the target.

Figure 1 shows a sample decision tree for a well-known sample dataset, in which examples are descriptions of weather conditions (Outlook, Humidity, Windy, Temperature), and the target concept is whether these conditions are suitable for playing golf or not (Quinlan, 1986).
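To make the resemblance between the two formulas concrete, here is a minimal Python sketch. The function names (`expected_value`, `surprise`, `entropy`) and the `{value: probability}` representation are illustrative choices, not part of the original text. `entropy` has the same shape as `expected_value`, with each value \(x\) replaced by its surprise \(-\log_2 p(x)\), and it reproduces the two coin examples.

```python
import math

def expected_value(dist):
    """E[X] = sum over x of x * p(x), for a {value: probability} mapping."""
    return sum(x * p for x, p in dist.items())

def surprise(p):
    """Bits needed to communicate an outcome that has probability p: -log2 p."""
    return -math.log2(p)

def entropy(dist):
    """H(X) = sum over x of p(x) * (-log2 p(x)): the expected surprise, in bits.

    Same shape as expected_value, with x replaced by -log2 p(x).
    Zero-probability outcomes contribute nothing and are skipped.
    """
    return sum(p * surprise(p) for p in dist.values() if p > 0)

fair_coin = {"heads": 0.5, "tails": 0.5}
two_tailed_coin = {"heads": 0.0, "tails": 1.0}
fair_die = {face: 1 / 6 for face in range(1, 7)}

print(entropy(fair_coin))        # 1.0 -> one bit per toss
print(entropy(two_tailed_coin))  # 0.0 -> nothing to communicate
print(expected_value(fair_die), entropy(fair_die))  # ≈ 3.5 and ≈ log2(6) ≈ 2.585
```

The fair coin needs one bit per result, while the two-tailed coin needs none, because the outcome is known in advance.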
Quantifying Randomness: Entropy, Information Gain and Decision Trees

Entropy

Entropy is a measure of expected “surprise”. The formula for calculating the entropy of a target attribute \(Y\) is \(H(Y) = -\sum_j p(y_j)\log_2 p(y_j)\). To use it when choosing a split, select an attribute and, for each of its values, check the distribution of the target attribute's values.
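The procedure just described (pick an attribute, group the rows by its values, and measure the target entropy inside each group) can be sketched in Python as the attribute-selection step of ID3. This is only that one step, not the full recursive tree construction. The data is the 14-example play-golf weather table as it is commonly reproduced from Quinlan (1986); if the exact rows matter, check them against the original source. The function names are illustrative.

```python
import math
from collections import Counter

# The play-golf weather table as commonly reproduced from Quinlan (1986).
COLUMNS = ("Outlook", "Temperature", "Humidity", "Windy", "Play")
ROWS = [
    ("sunny", "hot", "high", False, "no"),
    ("sunny", "hot", "high", True, "no"),
    ("overcast", "hot", "high", False, "yes"),
    ("rainy", "mild", "high", False, "yes"),
    ("rainy", "cool", "normal", False, "yes"),
    ("rainy", "cool", "normal", True, "no"),
    ("overcast", "cool", "normal", True, "yes"),
    ("sunny", "mild", "high", False, "no"),
    ("sunny", "cool", "normal", False, "yes"),
    ("rainy", "mild", "normal", False, "yes"),
    ("sunny", "mild", "normal", True, "yes"),
    ("overcast", "mild", "high", True, "yes"),
    ("overcast", "hot", "normal", False, "yes"),
    ("rainy", "mild", "high", True, "no"),
]

def entropy(labels):
    """H(Y) = -sum_j p(y_j) log2 p(y_j), with p estimated from label frequencies."""
    total = len(labels)
    return sum(-(c / total) * math.log2(c / total) for c in Counter(labels).values())

def information_gain(rows, attr_index, target_index):
    """Target entropy before the split minus the weighted target entropy after it."""
    before = entropy([r[target_index] for r in rows])
    groups = {}
    for r in rows:
        # Group the target labels by the value this attribute takes.
        groups.setdefault(r[attr_index], []).append(r[target_index])
    after = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return before - after

target = COLUMNS.index("Play")
gains = {
    name: information_gain(ROWS, i, target)
    for i, name in enumerate(COLUMNS)
    if i != target
}
best = max(gains, key=gains.get)

for name, gain in sorted(gains.items(), key=lambda kv: -kv[1]):
    print(f"{name:<12} IG = {gain:.3f}")
print("ID3 would split first on:", best)
```

On this table Outlook has the highest information gain (about 0.247 bits, against about 0.152 for Humidity, 0.048 for Windy and 0.029 for Temperature), so it is chosen as the root split. ID3 would then repeat the same step on each non-pure subset.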
